Application Assurance

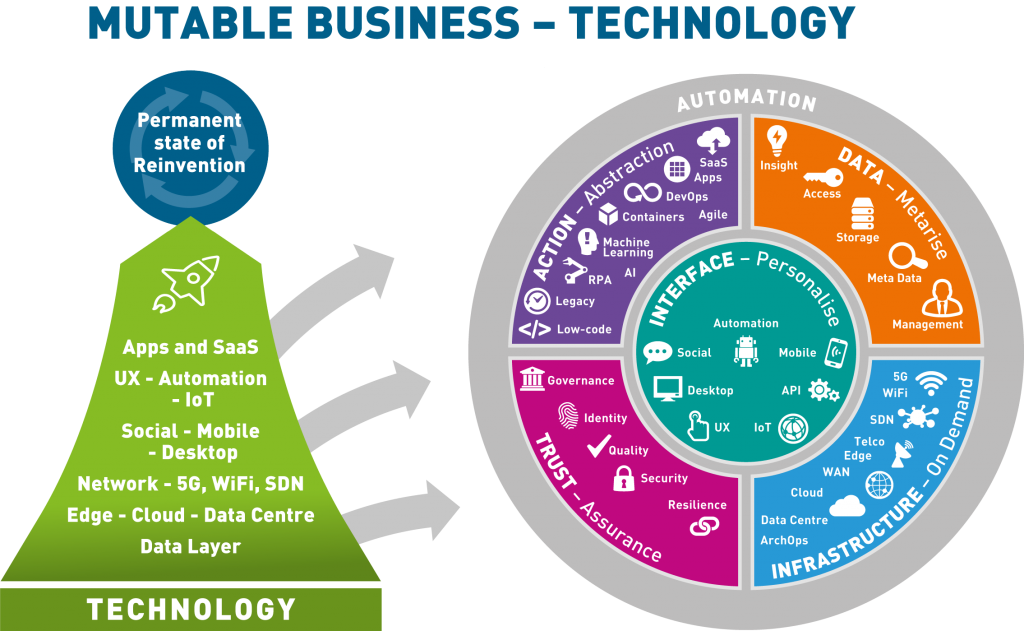

Application Assurance is ultimately about having the culture, processes and technologies that make sure that Outcome Automation delivers business outcomes that all of the stakeholders in a business can trust – employees, customers and regulators, as well as management and shareholders. Good Governance is expected of any commercial organisation.

There are multiple aspects of this:

- Asset Identification. Unless you can identify and manage all the assets essential to delivering business outcomes, Application Assurance cannot be complete. The relationship of Application Assets to Business Outcomes on one side and to Data Assets on the other must be known.

- Application Classification in business terms, so that you can address applications within a business structure and all stakeholders can communicate.

- Application Prioritisation, so that limited resources can be allocated cost-effectively to applications, and so that risk management and impact analysis are possible.

- Application Validation, the verification that the outcomes and behaviours of applications are as you expect, with no surprises. These days, this implies a DevOps continuous build, continuous integration, continuous delivery process, with automated validation throughout. Integration testing includes validation of external links and API quality/security (which may involve Service Virtualisation).

Only the last of these fits with what is usually thought of as application testing. Automation of testing and test data management (TDM) is still necessary, but not sufficient; and the complexity of modern asynchronous web-based applications means that testing is increasingly stochastic in nature. Augmented Intelligence (AI) and Machine Learning have an increasing part to play in managing the complexities involved in assuring software quality, according to Eran Kinsbruner (Chief Evangelist at Perfecto, part of Perforce Software).

The first of these, Asset Identification, is essentially Configuration Management, a high-maturity process which includes version control but is a lot more than just that. Make sure that open source software assets are included (increasingly, business applications will depend on open source assets). Take advantage of automation platforms such as GitHub or Digital.ai (for example) wherever possible.

Asset Identification

Unless you can identify and manage all the assets essential to delivering Business Outcomes, Application Assurance cannot be complete. The relationship of Application Assets to Business Outcomes on one side and to Data Assets and Infrastructure Assets on the other must be known, in order to claim full Application Assurance.

Identifying physical Data and Infrastructure Assets is reasonably well understood – just don’t rely on automatic discovery alone, at least until you have built confidence in it. Some assets may not be connected to the network you are using for discovery, older versions of assets may not be recognised and your identification algorithms may be flawed. Subject automatic discovery to regular “sanity checks” by, at least, talking to the people working in the area and by conducting the occasional visual check. Take advantage of automated asset discovery and management packages – and make sure that they can handle virtual assets and cloud-based “Assets-as-a-Service”.

Understanding business outcomes is a matter of more or less formal business requirements analysis. This involves more than just documenting the business’ wish list, and the result needn’t be a formal document: a photo of a whiteboard or a set of yellow post-it notes may well be enough. Business requirements must be reviewed – tested, validated – for consistency and accuracy, prioritised (so that, for example, “minimum viable product” can be identified), and agreed with all stakeholders. Beware of confusing technology requirements with business requirements; and beware of the possible gap between what the CEO thinks does or should happen and what really goes on on the ground.

There are many commercial and Open Source tools to automate Asset Discovery but some are limited to, e.g., licensable COTS (Commercial Off The Shelf) software, and commercial product licensing is only a small (but necessary) part of Application Assurance. Holistic Asset Management of the assets necessary to the delivery of a Business Outcome is required and this may (depending on where you put the man-machine boundary) include human assets and third-party services. From a software application point of view, COTS, in-house developed and Open Source software must all be included. And relationships between assets (which applications support which Business Outcomes, for example; or which people are responsible for which applications and so on) are a vital part of Application Asset Management. This is sometimes known as “Configuration Management” but that term has several interpretations in practice, some quite limited.
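The relationships between assets described above can be pictured as a simple dependency graph. The following is a minimal sketch, assuming a hand-maintained mapping; every asset name in it is hypothetical and the structure is an illustration, not the data model of any particular asset management tool. It answers one impact-analysis question: which Business Outcomes are at risk if a given asset fails?

```python
from collections import defaultdict

# Hypothetical asset inventory: each key depends directly on the assets listed.
DEPENDS_ON = {
    "outcome:customer-invoiced": ["app:billing", "person:billing-owner"],
    "outcome:order-shipped": ["app:order-entry", "app:warehouse", "service:courier-api"],
    "app:billing": ["data:customer-db", "lib:open-source-pdf"],
    "app:order-entry": ["data:customer-db", "infra:web-cluster"],
    "app:warehouse": ["data:stock-db"],
}


def build_reverse_index(depends_on):
    """Map each asset to the assets that depend on it directly."""
    used_by = defaultdict(set)
    for asset, dependencies in depends_on.items():
        for dependency in dependencies:
            used_by[dependency].add(asset)
    return used_by


def impacted_outcomes(asset, depends_on):
    """Return every Business Outcome that relies, directly or indirectly, on asset."""
    used_by = build_reverse_index(depends_on)
    seen, stack, outcomes = set(), [asset], set()
    while stack:
        current = stack.pop()
        if current in seen:
            continue
        seen.add(current)
        if current.startswith("outcome:"):
            outcomes.add(current)
        stack.extend(used_by.get(current, ()))
    return sorted(outcomes)


if __name__ == "__main__":
    print(impacted_outcomes("data:customer-db", DEPENDS_ON))
```

Note that the sketch treats people, third-party services and open source libraries as assets alongside applications and data, in line with the holistic view argued for here.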

Application Classification

You need to classify applications in business terms, so that you can address applications within a business structure and all stakeholders can communicate with each other, without confusion. As well as business area, classification might include risk, location (Cloud or datacentre), team responsible etc.

Application Prioritisation

Resources are always going to be constrained so it is important to prioritise applications in terms of their existential importance to the business. All businesses are regulated to some extent and applications whose failure would stop you legally doing business (for example, by breaching privacy regulations such as GDPR) must rank highly, followed by core business applications. This prioritisation must be agreed across all stakeholders and is really a board-level strategic decision, although the work of doing it will be delegated.
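As an illustration of the ranking rule just described, here is a minimal sketch. The `Application` fields and the example names are assumptions made for this illustration; a real prioritisation would weigh many more factors and would be agreed by the business rather than computed.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Application:
    name: str
    legally_required: bool  # failure would stop the business trading legally
    core_business: bool     # directly delivers a core Business Outcome


def priority_key(app):
    """Sort key: legally required first, then core business, then the rest."""
    return (not app.legally_required, not app.core_business, app.name)


def prioritise(apps):
    """Return applications in descending order of priority."""
    return sorted(apps, key=priority_key)


if __name__ == "__main__":
    portfolio = [
        Application("staff-canteen-menu", False, False),
        Application("order-entry", False, True),
        Application("privacy-consent-register", True, False),
    ]
    for app in prioritise(portfolio):
        print(app.name)
```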

Resilience is part of Application Assurance and there is an interesting crossover with business continuity planning here. When you are deciding which applications must be available after a contingency, you are also, in effect, prioritising applications for application assurance resources – as long as the people involved are all in communication.

Application Validation

To say that manual testing is a dead-end, in today’s world of large-scale, asynchronous, cloud-based, Agile applications is to state the obvious. Whatever you think of last-century transaction-processing database applications, where failing units of work were backed out before any other application could be affected, they were comparatively easy to test. These days, a business transaction that is ultimately abandoned may post intermediate results that are acted on by other applications, that may even make payments or ship goods to third parties as a result – which makes undoing abandoned transactions rather complicated.
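One common way to handle this (not something this article prescribes) is to record a compensating action for every completed step, and to run those actions in reverse order if the transaction is abandoned. The sketch below assumes a hypothetical order flow; all of the names are illustrative. Note that the payment is not erased but offset by a refund entry, because other applications may already have acted on it.

```python
class CompensationLog:
    """Records an undo action for each completed step of a business transaction."""

    def __init__(self):
        self._undo_actions = []

    def record(self, description, undo):
        self._undo_actions.append((description, undo))

    def abandon(self):
        """Run undo actions in reverse order; return descriptions of those run."""
        undone = []
        while self._undo_actions:
            description, undo = self._undo_actions.pop()
            undo()
            undone.append(description)
        return undone


def place_order(stock, payments, log, item, amount, courier_available=True):
    """Hypothetical order flow: each completed step registers how to reverse it."""

    def release_stock():
        stock[item] += 1

    def refund():
        # A posted payment cannot be erased, only offset by a refund entry.
        payments.append(-amount)

    stock[item] -= 1
    log.record("reserve stock", release_stock)
    payments.append(amount)
    log.record("take payment", refund)
    if not courier_available:
        raise RuntimeError("courier unavailable")


def demo():
    stock, payments, log = {"widget": 5}, [], CompensationLog()
    undone = []
    try:
        place_order(stock, payments, log, "widget", 20, courier_available=False)
    except RuntimeError:
        undone = log.abandon()
    return stock, payments, undone
```

Both the happy path and the abandoned path need validating; the second is the one that is easy to overlook.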

Error handling, transaction repudiation or removal, fraud detection and the like may well require more logic – application code – than a straightforward successful business transaction with an honest third party, and this “bad case” code (and the associated application behaviour) must be validated. This is often done rather cursorily, because the proportion of successful transactions is, hopefully, much higher – which is why “bad cases” are the focus for fraudsters and other criminals.

Many of the technologies used in Application Validation are described in this Bloor Application Quality Assurance Hyper Report. Don’t limit your validation to the application under test, as its impact on other applications matters: not just the scope of impact of failure, but also the impact of poor performance. And don’t see validation as a one-time thing, as continued application monitoring can facilitate continual improvement.

With a DevOps pipeline, test and validation automation is the norm. Since tests are not run manually, but are run by software as part of the pipeline every time something changes, tests don’t get overlooked and past errors don’t creep back in. Increasingly, AI and ML are being used to recognise potential behaviour problems and known coding errors and fix them before developers even notice them (but they should still be reported, so developers don’t become complacent and deskilled).

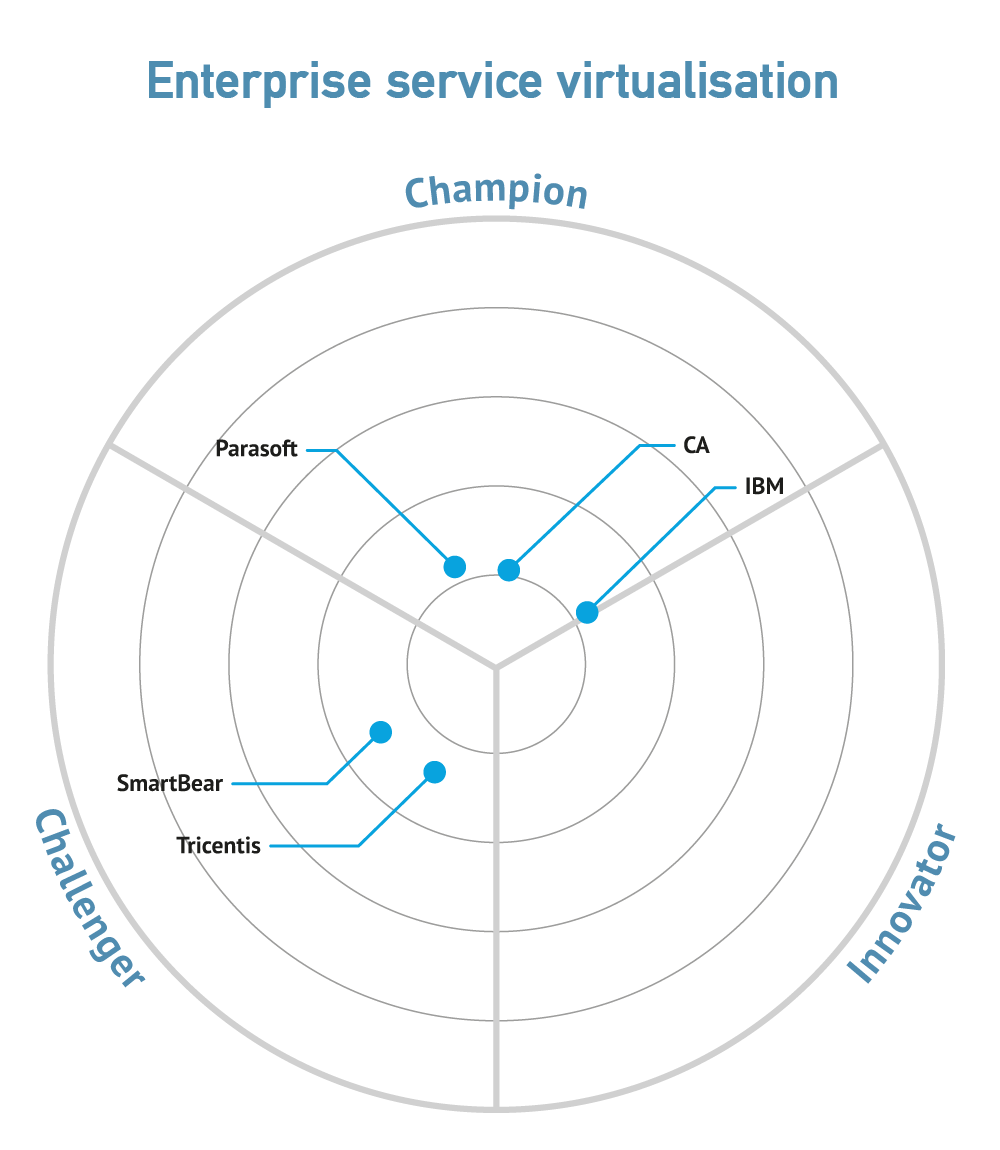

Service virtualisation

In order to allow a DevOps pipeline, a prototype service or even a full Digital Twin to work in a real-world environment, the services behind some of the APIs may need to be virtualised (for example, services that are not yet available or are not licensed for non-production use). This is Service Virtualisation.

Service virtualisation is a technique which allows you to develop and test without regard to the stability or availability of services that you depend on. In effect, use of service virtualisation software allows you to “pretend” that these services are available and stable. There are various techniques that you can use for this purpose: record and playback, creating dummy services, creating echo stubbed responses and so on.
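The record-and-playback technique mentioned above can be sketched in a few lines. This is a simplified illustration, not the API of any service virtualisation product: the `VirtualService` class and the `real_credit_check` function are hypothetical, and a real tool would also handle protocols, latency, state and data variation.

```python
import json


class VirtualService:
    """Record-and-playback stand-in for a dependency (a sketch, not a product API)."""

    def __init__(self, real_call=None):
        self._real_call = real_call  # callable taking a request dict, or None
        self._recordings = {}

    @staticmethod
    def _key(request):
        # Canonical JSON so that key order does not affect matching.
        return json.dumps(request, sort_keys=True)

    def call(self, request):
        key = self._key(request)
        if self._real_call is not None:
            # Recording mode: pass through to the real service and remember the answer.
            response = self._real_call(request)
            self._recordings[key] = response
            return response
        if key in self._recordings:
            # Playback mode: answer from the recording.
            return self._recordings[key]
        raise LookupError(f"no recorded response for {key}")

    def go_offline(self):
        """Stop calling the real service; answer from recordings only."""
        self._real_call = None


def real_credit_check(request):
    # Hypothetical stand-in for a slow or unavailable third-party service.
    return {"customer": request["customer"], "approved": request["amount"] <= 1000}
```

A test suite would record once while the real service is reachable, call `go_offline()`, and then run repeatedly against the recordings. Requests that were never recorded fail loudly rather than returning invented answers.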

Ultimately, sophisticated service virtualisation evolves into something almost indistinguishable from a Digital Twin.

A plea here, for developers to put aside their egos and to use every appropriate compiler switch to check for things like out-of-bounds array accesses, and to subject all code to static and dynamic analysis (of course, if you use a low- or no-code solution, or orchestrate third-party components, you should be able to assume that this has been done – but check anyway). Static code analysis, for example, doesn’t find all errors, but it can find a lot of annoying potential bugs very quickly and cheaply – and can find unreachable or “commented out” code, which is the bane of basic Application Assurance (because it effectively hasn’t ever been tested and other errors may start it executing).
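To give a flavour of how cheap such checks can be, here is a toy static check written with Python's standard `ast` module. It flags any statement that directly follows a `return`, `raise`, `break` or `continue` in the same block. It is a deliberately narrow sketch (the function name and sample are made up for illustration); real static analysers do far more, including data-flow analysis.

```python
import ast

TERMINATORS = (ast.Return, ast.Raise, ast.Continue, ast.Break)


def find_unreachable(source):
    """Return line numbers of statements that directly follow a terminating statement."""
    unreachable = []
    for node in ast.walk(ast.parse(source)):
        for field in ("body", "orelse", "finalbody"):
            statements = getattr(node, field, None)
            if not isinstance(statements, list):
                continue  # e.g. a lambda body is a single expression, not a block
            for previous, current in zip(statements, statements[1:]):
                if isinstance(previous, TERMINATORS):
                    unreachable.append(current.lineno)
    return sorted(unreachable)


SAMPLE = (
    "def total(balances):\n"
    "    return sum(balances)\n"
    "    print('never runs')\n"
)

if __name__ == "__main__":
    print(find_unreachable(SAMPLE))
```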

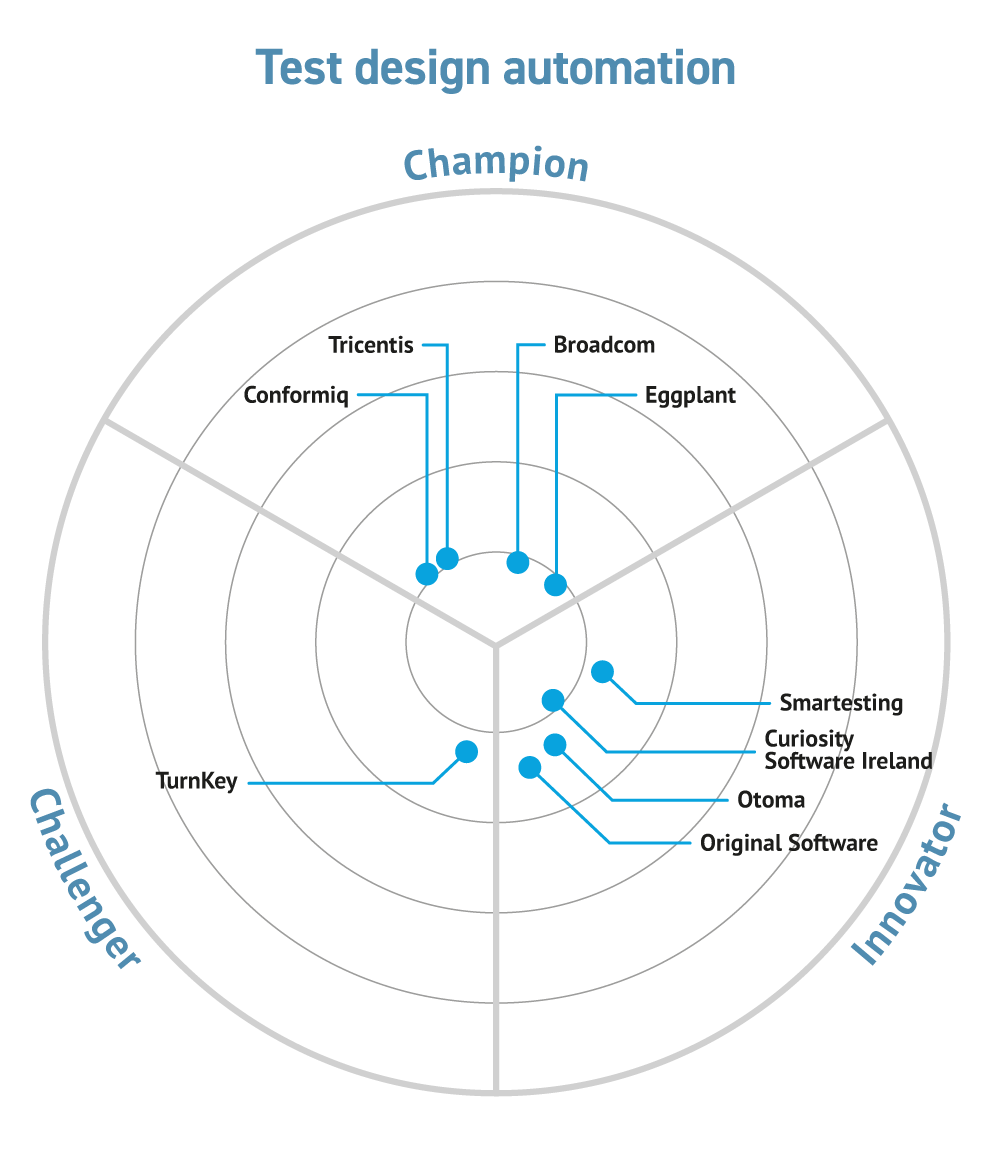

There is a lot of potential overlap between Outcome Automation and Application Assurance, basically because the same tools can be used for both. Testing a program simply involves writing another program to do the testing. In fact, some vendors are repurposing their Test Design Automation tools, which simply use a flowchart or other system model to automatically create tests, as Robotic Process Automation citizen developer tools.

Security

Good security (basically, access controls and restriction of function in line with the company security policy) should be a priority and built in from the first. It shouldn’t really be a separate section, as it should underlie everything. Nevertheless, this section does give us an opportunity to underline this.

However, don’t simply rely on the developers (they have an important part to play, but may make assumptions based on their current environment and experience). Consider the use of external security specialists and penetration testers – but establishing Trust with them matters.

Encryption is an important enabler of Security (and consider it both at rest, in storage; and in motion, on the network). But it doesn’t deliver security of itself (who do you give the keys to, and why; what algorithm should you choose?). Encryption coding is a specialist area: don’t try DIY unless you have trained, experienced programmers in-house.

Data (and applications) have to be decrypted for use, so Network and Endpoint security is important.

And, since Bloor believes that Data is fundamental to business automation, pay particular attention to Information Governance and Data Security.

Don’t overlook Privacy issues with Application Assurance – they matter, not just for legal reasons but because of the reputation risk implications. Loss of data from a test environment may be as embarrassing as losing it from a production system. Data Masking is often used to protect the privacy of organisations or people in test data, but this is non-trivial (for instance, a major employer in a particular town or a person with unique characteristics may be easy to recognise in a dataset, even if their names etc. are masked).
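The re-identification risk described above can be checked for in a simple way: count how many masked records share each combination of the remaining descriptive fields, and flag any combination that is too rare. This is the idea behind k-anonymity, named here as an illustration rather than as this article's recommendation. The field names and records below are invented.

```python
from collections import Counter


def risky_records(records, quasi_identifiers, k=2):
    """Return records whose quasi-identifier combination occurs fewer than k times."""

    def combo(record):
        return tuple(record[field] for field in quasi_identifiers)

    counts = Counter(combo(record) for record in records)
    return [record for record in records if counts[combo(record)] < k]


# Invented test data: names are masked, but town and employer remain.
MASKED = [
    {"name": "MASKED", "town": "Smalltown", "employer": "MegaCorp"},
    {"name": "MASKED", "town": "Bigcity", "employer": "Retailer A"},
    {"name": "MASKED", "town": "Bigcity", "employer": "Retailer A"},
]

if __name__ == "__main__":
    for record in risky_records(MASKED, ["town", "employer"]):
        print(record)
```

Here the single Smalltown record is flagged: masking the name has not hidden who it describes.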

Anybody who depends on Business Outcomes – that is, any stakeholder – should be interested in Application Assurance, from the CEO down.

The maintenance of quality in Outcome Automation – Application Assurance – is vital because, although desired changes can be implemented at speed across an organisation, a misunderstanding of the business process or regulatory requirements can propagate equally quickly.

The old component-based programming concepts of, for example, (low) Coupling and (high) Cohesion are still important to Application Assurance but their scope is greater. Even with Agile practices and no-code environments, it is still possible to write bad apps; it is just harder. If a component called “just total up all outstanding balances” also handles, say, opening new accounts, the application will likely be, or become, unmaintainable. This also applies to business applications and Business Outcomes as a whole. At some level of description, they should do one thing and one thing only, independently of other outcomes.

There are four key emerging trends in Application Assurance:

- Wider scope – all stakeholders should be able to gain appropriate Application Assurance;

- Elimination of silo boundaries;

- “Shift Left” – design for assurance as early as possible;

- Increased use of explainable Augmented Intelligence (AI) and Machine Learning to provide Application Assurance as systems become more complicated and/or more complex (“complicated” is often a consequence of bad design or organisational constraints; “complex” is a fundamental property of essential business logic).

We think that major analytics and service companies will increasingly acquire or develop Application Assurance capabilities, possibly by partnering with other companies.

Most of the major players – including BMC, Broadcom, IBM, Microsoft and Rocket – are expanding their Application Assurance capabilities. Increasingly, these have a company-wide focus, and are not just limited to a particular technology silo.

Daniel Howard has reported on BMC Compuware assurance tools that bring the mainframe to the party, but BMC is not unique in this.

We have been particularly impressed by Perforce’s overall capabilities and its use of AI for assurance of application behaviour.

Nevertheless, although all these tools are on a continual improvement journey, none of them quite offers an overall Business Outcome perspective yet; and some are still focussed on code instead of on Business Outcomes.

