Software testing is a diverse area of activity with literally thousands of companies providing tools to address specific testing situations. One of the leading ones is Keysight. Its primary focus is on electronic design, test, and measurement solutions for various industries, and it has distinguished itself by being the first company in its field to implement Gen AI within its product set.

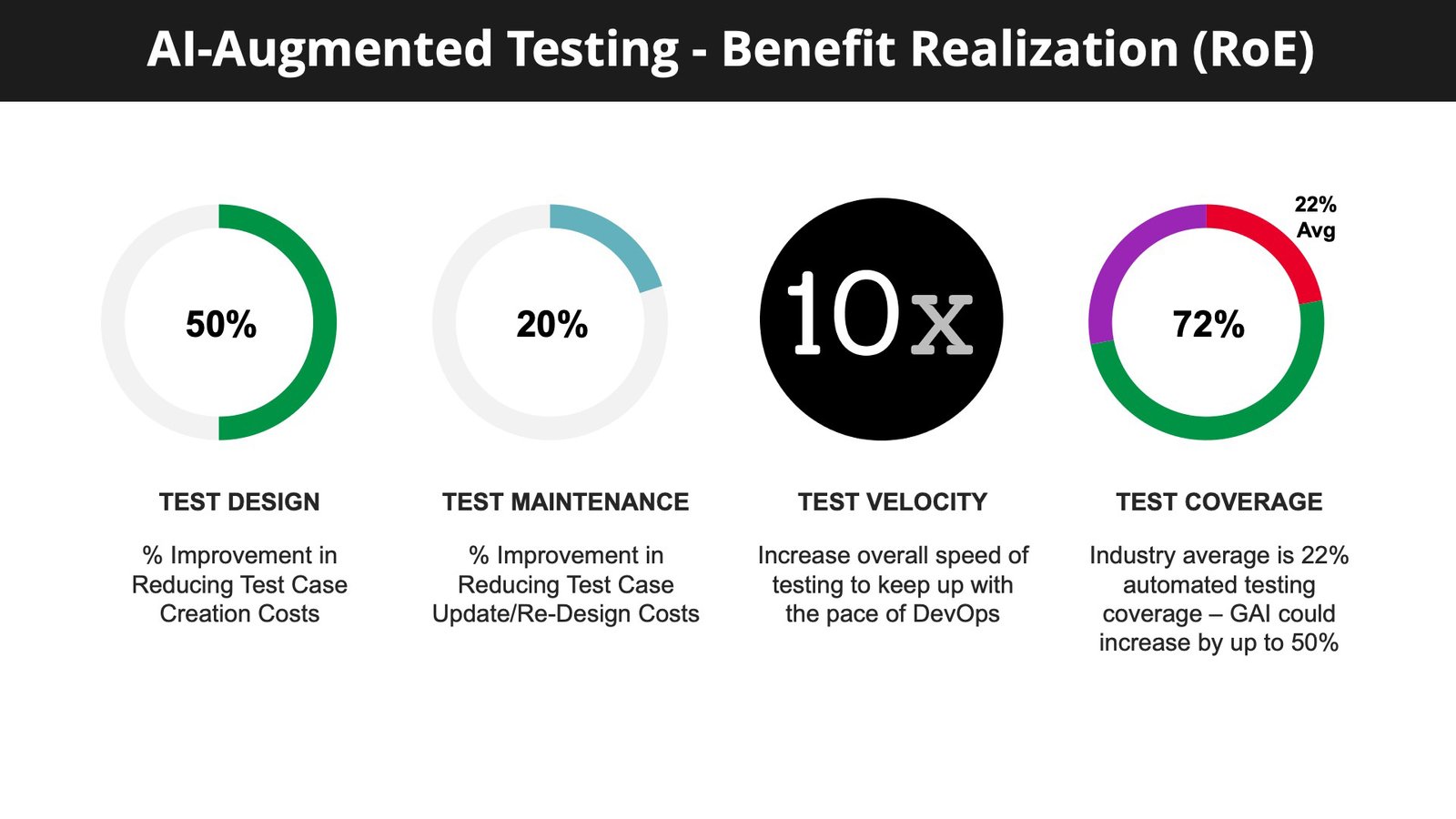

The Keysight product set is deep and broad, and in this blog we’ll focus primarily on what Keysight has done in the area of requirements verification. Before we do so, however, it is necessary to point out that Keysight has done considerable work on the application of Gen AI to testing, as the following Keysight slide illustrates:

The benefits from Gen AI shown here are startling: in test design, a 50% reduction in test case creation costs; in test maintenance, a 20% improvement in test case update and redesign costs; in the speed of testing, a 10x improvement; and in test coverage, an increase of about 50%.

These are exciting possibilities and Keysight is producing Gen AI modules to realize these remarkable improvements. But let’s now focus on requirements verification.

The Description of a System

Since the invention of the waterfall development model, back in the prehistory of IT, there has always been a large cost difference between discovering an error during requirements specification and finding it at implementation. The ratio is usually quoted as roughly 1000 to one. So with Gen AI it makes sense to focus on requirements testing as an area where significant productivity gains are possible. It looks like a potentially good area of application simply because requirements are expressed in human language and that is Gen AI’s field.

One way of looking at software development is to regard every step forward as refining or improving part of the “description of a system”. The requirements specification is a description of a system, the modeling work creates a description of a system, the development work contains a description of a system, and ultimately, the completed product embodies the description of a system right down to the finest detail. And, of course, there may still be errors in that description.

Now, while the original planners of the system may have believed that they knew exactly how the system needed to behave, that was an assumption, and the final behavior of the system usually proves to be somewhat different – as it encounters reality.

Each error produces a ripple effect that impacts other areas and later leads to rework across multiple stages of the project. So catching errors early is the key, and requirements specification is the best point at which to catch them.

Keysight’s LLMs

Keysight has built LLM-based solutions for multiple areas of the testing cycle. Each has its own context, but in general Keysight quickly discounted the possibility of using any public LLM, as the risk of leaking corporate intellectual property made it a non-starter. It also wanted the solutions it built to be relatively inexpensive. Like most companies building internal LLMs, Keysight went for a RAG-based solution.

The point here, if you were not aware, is that vanilla LLMs are prone to giving hallucinated (i.e. wrong) answers. A technique has therefore been developed that blends a knowledge base with the LLM, so that the LLM consults the knowledge base when producing a response and, in doing so, provides references to where it acquired its information. Nowadays, almost all private LLMs have some kind of RAG-based architecture (RAG stands for Retrieval-Augmented Generation).
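To make the RAG idea concrete, here is a minimal sketch in Python. It is not Keysight's implementation: the knowledge base, the requirement texts, the word-overlap scoring and the function names (`retrieve`, `build_prompt`) are all assumptions made up for illustration, and no real LLM is called. It only shows the two steps described above: look up relevant passages first, then hand them to the model along with identifiers it can cite.

```python
import re
from collections import Counter

# Hypothetical in-memory knowledge base: document id -> text (invented examples).
KNOWLEDGE_BASE = {
    "req-001": "The login page shall lock the account after three failed attempts.",
    "req-002": "The report export shall produce a PDF within ten seconds.",
    "req-003": "Passwords shall be at least twelve characters long.",
}

def tokenize(text):
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())

def retrieve(question, knowledge_base, top_k=2):
    """Return up to top_k (doc_id, text) pairs ranked by shared-word count.

    Real systems typically use vector embeddings; plain word overlap is a
    stand-in so the sketch stays self-contained.
    """
    question_words = Counter(tokenize(question))
    scored = []
    for doc_id, text in knowledge_base.items():
        overlap = sum((question_words & Counter(tokenize(text))).values())
        if overlap > 0:
            scored.append((overlap, doc_id, text))
    scored.sort(key=lambda item: (-item[0], item[1]))
    return [(doc_id, text) for _, doc_id, text in scored[:top_k]]

def build_prompt(question, passages):
    """Combine retrieved passages and the question into one prompt string."""
    context = "\n".join(f"[{doc_id}] {text}" for doc_id, text in passages)
    return (
        "Answer using only the sources below and cite their ids.\n"
        f"Sources:\n{context}\n"
        f"Question: {question}"
    )

question = "How many failed attempts lock the account?"
passages = retrieve(question, KNOWLEDGE_BASE)
prompt = build_prompt(question, passages)
print(prompt)  # This prompt would then be sent to the private LLM.
```

Because the source ids travel with the passages, the model's answer can point back to where the information came from, which is the property the paragraph above highlights.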

Keysight took examples of formal requirements specification standards and, for practical reasons, fed them into the LLM to generate Gherkin (a plain-text specification language). Further transformations into the programming languages SenseTalk and Python produce model-building and coding output.
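For readers who have not met Gherkin, the sketch below shows roughly what such output looks like. The `Feature`, `Scenario`, `Given`, `When` and `Then` keywords are standard Gherkin; everything else is an assumption for illustration. In particular, the requirement is invented, and the hypothetical `to_gherkin` helper is a simple hand-written template, not the LLM-driven translation Keysight uses.

```python
def to_gherkin(feature, scenario, given, when, then):
    """Render one requirement as a single-scenario Gherkin feature."""
    lines = [
        f"Feature: {feature}",
        "",
        f"  Scenario: {scenario}",
        f"    Given {given}",
        f"    When {when}",
        f"    Then {then}",
    ]
    return "\n".join(lines)

# Invented requirement: "The account shall lock after three failed logins."
gherkin = to_gherkin(
    feature="Account lockout",
    scenario="Lock the account after repeated failed logins",
    given="a registered user with two failed login attempts",
    when="the user enters a wrong password again",
    then="the account is locked",
)
print(gherkin)
```

The value of this intermediate form is that it is readable by the people who wrote the requirement and structured enough to be turned into automation scripts.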

A representation of what this looks like is provided by the following slide:

At the top of the illustration, on the left-hand side, we see a set of requirements as input to the Gen AI Engine. The input is first translated into a set of automation scripts. The second translation can generate either program code (in Python) or natural language output for modeling. The screen at the bottom illustrates what the user sees. It is worth pointing out that the Multimodal Gen AI Engine uses a Mixture of Experts for Large Vision-Language Models (MoE-LLaVA), with each expert serving a different purpose, such as language interpretation, vision for screen identification, and code generation.

As shown, the engine will run on a laptop (make it one with the latest processor and 16 GB of memory), although currently it will run slowly. It may take, say, three hours to process 10 GB of information. But never mind: when you think of what it is doing and how fast it is getting done, you’ll probably be happy to drink a few coffees and get on with your email (on another device, by the way).
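As a back-of-the-envelope check on that figure, the short Python sketch below converts "10 GB in three hours" into a throughput and scales it to other input sizes. The linear scaling is an assumption, as are the decimal definition of a gigabyte and the hypothetical `estimate_hours` helper; actual processing time will depend on the content and the hardware.

```python
# Figures quoted in the text: roughly 10 GB processed in about three hours.
REFERENCE_GB = 10
REFERENCE_HOURS = 3

# Throughput in megabytes per second, assuming 1 GB = 1000 MB.
mb_per_second = (REFERENCE_GB * 1000) / (REFERENCE_HOURS * 3600)

def estimate_hours(size_gb):
    """Estimate processing time, assuming time grows linearly with size."""
    return size_gb * REFERENCE_HOURS / REFERENCE_GB

print(f"Throughput: about {mb_per_second:.2f} MB/s")
print(f"Estimated time for 25 GB: {estimate_hours(25):.1f} hours")
```

In other words, the quoted rate is a little under 1 MB per second.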

The bottom line

Keysight has developed so much with Gen AI that it is impossible to capture it all in a single blog. It would require a long paper.

Across the IT industry, businesses are grasping the Gen AI nettle and engineering it into their products. Testing seems to be an excellent area, perhaps even the best area, for its application. After all, many of the errors that occur within the development and maintenance life-cycle stem from the use of language. If Gen AI cannot make a big impact here, then where?

And those who currently have the need to accelerate their testing activity should take a close look at what Keysight has done.