Machine Learning and Artificial Intelligence

At its simplest, machine learning is the use of computers to run algorithms that let them undertake reasoning previously seen as the preserve of humans. This can be directed by human intervention, or undirected, where the data drives the analysis. As the level of direct human intervention decreases, we approach what is generally understood by artificial (or augmented) intelligence: machines coming up with better answers than humans in far less time.

Quotes:

“A baby learns to crawl, walk and then run. We are at the crawling stage when it comes to applying machine learning.”

Dave Waters

“Artificial intelligence, deep learning, machine learning – whatever you are doing if you do not understand it – learn it! Because otherwise you’re going to be a dinosaur in 3 years.”

Mark Cuban

“Machine Intelligence is the last invention that humanity will ever need to make.”

Nick Bostrom

Anything that can be defined by an algorithm can be run on a machine, which uses data to refine its understanding of what to do, whether that is a relatively niche skill such as cropping a photograph or something more mainstream. Where we come across machine learning most often today is in the application of statistical analysis. Much of what we see is based on two well-known statistical approaches: prediction and clustering. Prediction uses a set of input variables to predict outcomes, for instance in finance (loans), property (house prices), law (court outcomes) and health (diagnosis). In clustering, the machine looks at all of the variables in a given population and divides it into groups whose members have more in common with each other than they do with members of other groups. This could be used, for example, by a mobile phone company running a call stimulation campaign to identify key groups with whom targeted messages should resonate.
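
To make the clustering idea concrete, here is a minimal sketch of k-means, one common clustering technique, written in plain Python. It is an illustration only: the customer figures (monthly call minutes and monthly data usage in GB) are invented, the function names are hypothetical, and a real campaign would use a tested library and scale the variables first.

```python
import random


def nearest(point, centroids):
    """Return the index of the centroid closest to point (squared Euclidean distance)."""
    return min(
        range(len(centroids)),
        key=lambda i: sum((a - b) ** 2 for a, b in zip(point, centroids[i])),
    )


def kmeans(points, k, iterations=50, seed=0):
    """Basic k-means: returns a cluster label per point and the final centroids."""
    rng = random.Random(seed)
    centroids = rng.sample(points, k)  # start from k distinct data points
    for _ in range(iterations):
        # Assignment step: each point joins the cluster of its nearest centroid.
        clusters = [[] for _ in range(k)]
        for p in points:
            clusters[nearest(p, centroids)].append(p)
        # Update step: move each centroid to the mean of its members.
        updated = [
            tuple(sum(dim) / len(members) for dim in zip(*members))
            if members
            else centroids[i]  # keep an empty cluster's centroid where it was
            for i, members in enumerate(clusters)
        ]
        if updated == centroids:  # assignments have stopped changing
            break
        centroids = updated
    return [nearest(p, centroids) for p in points], centroids


# Invented data: (monthly call minutes, monthly data usage in GB).
customers = [
    (600, 1.0), (650, 2.0), (620, 1.5),   # heavy callers, light data users
    (50, 20.0), (40, 25.0), (60, 22.0),   # light callers, heavy data users
]

labels, centroids = kmeans(customers, k=2)
for customer, label in zip(customers, labels):
    print(customer, "-> group", label)
```

With data this well separated, the heavy callers and the heavy data users end up in different groups, which is the kind of split a call stimulation campaign could then target.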

These techniques are well known, but the advantage that machine learning brings is the speed at which it arrives at a solution. In the past, hand-cranked models could take weeks to build and refine. Once built, it could take further days or weeks to establish the reliability and trustworthiness of the model against operational, as opposed to training, data. With machine learning, a process that took weeks if not months can be done in hours. Because the speed is so great, the whole data set can be used as input, rather than sampling the data and discarding outliers as statistically insignificant. Machine learning not only presents the prediction more quickly, it also calculates the statistical validity of the result (how reliable it is), transforming how quickly a final answer can be reached.
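
As a rough illustration of prediction and of checking reliability against data the model has not seen, the sketch below fits an ordinary least squares line to house prices and then scores it on a held-out set using R squared. The figures (floor area in square metres, price in thousands) are invented and the function names are hypothetical. Real platforms automate far richer versions of this, but the principle of judging a model on data other than its training data is the same.

```python
def fit_line(xs, ys):
    """Ordinary least squares for y = intercept + slope * x."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept, slope


def r_squared(xs, ys, intercept, slope):
    """Share of the variance in ys explained by the fitted line (1.0 is a perfect fit)."""
    mean_y = sum(ys) / len(ys)
    ss_res = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(xs, ys))
    ss_tot = sum((y - mean_y) ** 2 for y in ys)
    return 1 - ss_res / ss_tot


# Invented training data: floor area (m^2) and sale price (thousands).
train_area = [50, 70, 90, 110, 130, 150]
train_price = [152, 208, 271, 330, 391, 449]

# Invented held-out data, standing in for "operational" data the model never saw.
holdout_area = [60, 100, 140]
holdout_price = [181, 298, 422]

intercept, slope = fit_line(train_area, train_price)
holdout_r2 = r_squared(holdout_area, holdout_price, intercept, slope)

print(f"price is roughly {intercept:.1f} + {slope:.2f} * area")
print(f"R squared on held-out data: {holdout_r2:.3f}")
```

A high score on the held-out set suggests the model generalises beyond its training data. A large gap between training and held-out scores would be a warning sign.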

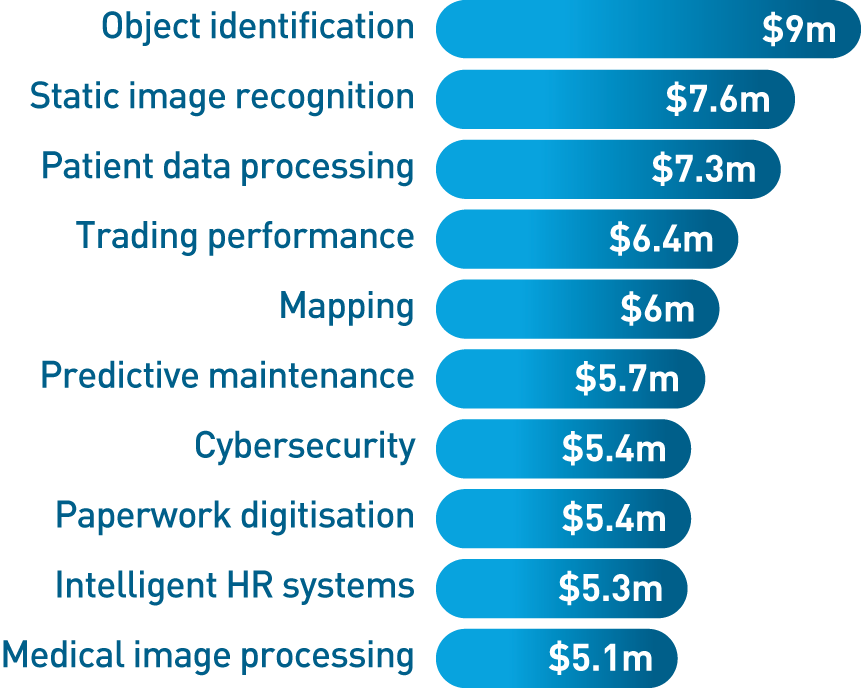

Figure 2 – Top Ten Use Cases 2016-2025 (Source: Tractica)

This does sound almost too good to be true, and that is reflected in the huge wave of hyperbole currently surrounding machine learning and AI. However, it must be remembered that the process is driven by the data, so the quality of the data is paramount in determining the quality of the final result. There is no short cut around the need for good, well-governed data: applying machine learning to data of dubious quality will produce misleading results. If inadequate data is used to train a model, even a simple one, the model will reflect the faults or biases of the training set and will be unreliable when presented with real-world facts.

Machine learning has moved from being a niche tool in the BI armoury to centre stage. IBM did much to popularise its capability with Watson, and now sells machine learning even as an add-on to its mainframe range. Mainstream BI vendors such as SAS and Teradata are significant players; business intelligence and data science platforms from TensorFlow to Pentaho, RapidMiner, TIBCO and beyond are all embracing it, as are specialist vendors such as DataRobot and Dataiku. The Cloud is a natural home for machine learning because of the speed, storage capability and affordability it brings, so key players include Amazon Machine Learning, Google Cloud Machine Learning and Microsoft Azure Machine Learning. For many in the Cloud, the most common platform is Spark with its open source library MLlib, which provides much of the functionality needed for machine learning. In short, machine learning is now ubiquitous.

If all of the vendors are now embracing it, what is the business justification? Whilst 46 percent of companies take over a year to see an ROI from their BI investments, insights-driven businesses grow 8 to 10 times faster than the market, and the ROI is expected to grow to $2.87 for every dollar spent over the next ten years. Part of the explanation is that with machine learning all of your data can be exploited for insight, as opposed to the approximately 20% that is commonplace today. That greater use of data to drive actionable insight is expected to increase revenues through product innovation (new products that match real customer needs), improved customer service, an enhanced supply chain and reduced exposure to fraud (including cyber attacks). It is also expected to transform compliance, auditing and reporting.

Already the use of machine learning, combined with the Internet of Things and embedded and edge analytics, is changing how the maintenance of expensive infrastructure is managed, avoiding downtime and reducing cost. For instance, at Thyssen Krupp Elevators possible errors are now captured by the lifts themselves and analysed, and engineers are then prompted to take relevant action. The end result is dramatically reduced downtime, happier customers, reduced costs and a system which the company feels will only get better as the machine learning improves over time.

What is the bottom line?

Machine learning is in its infancy. Over time it will become more powerful, and we will learn to exploit it in new ways. Delaying an implementation of machine learning will pose a commercial threat, whilst engagement will drive the transformation to an insight-driven future.
