Experian Data Quality

Updated July 14, 2014

Experian Data Quality offers several products: data capture and validation solutions; data cleansing and standardisation; data matching and deduplication; and the Data Quality Platform.

The data capture and validation solutions, which are often embedded in web-based applications, validate not just physical addresses but also email addresses and mobile phone numbers. The company's data cleansing solution, QAS Batch, is a batch-processing application for cleansing, standardising and enriching data. Finally, QAS Unify provides a matching engine and rules-based deduplication.

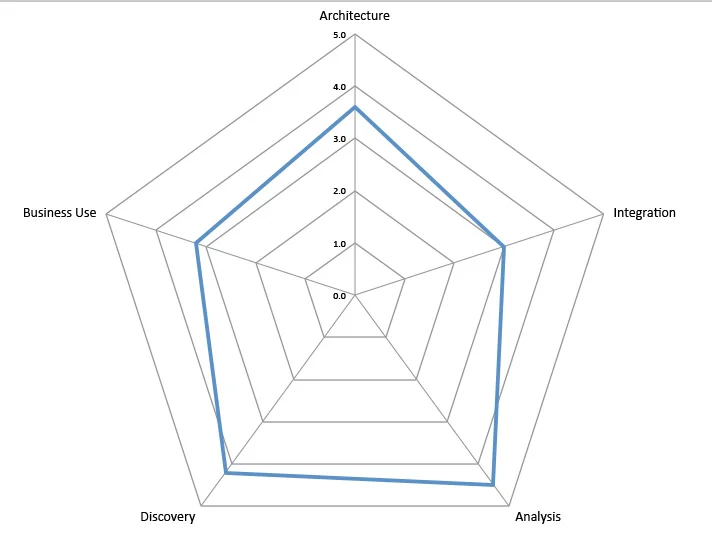

The Data Quality Platform has three main areas of functionality. Firstly, it provides data profiling and discovery. Secondly, it supports prototyping: a graphical rule builder lets you cleanse and transform your data interactively, using the sort of operations normally associated with both ETL (extract, transform and load) tools and data cleansing tools. The result is a 'prototype' of the data you need, together with a generated specification of the steps used to produce it. Thirdly, the Data Quality Platform can put these rules into operation, so that the product can be used for data quality and data governance as well as for data migration. Migration is possible because the product can generate load files for use with native application and database loaders. In other words, it enables data migrations without the need for an ETL tool.

Experian has an extensive direct sales force as well as a significant partner base. The company's name change, together with the introduction of the Data Quality Platform, marks a change of direction. Historically it has been a market leader in the name and address space, but it now wants to move beyond party data into the broader data quality market.

Experian Data Quality has some 9,000 users around the world for QAS Batch, QAS Unify and its capture and validation services. The Data Quality Platform is in fact white-labelled from another vendor: although Experian has very few customers for it as yet, nearly 500 organisations use the original product. Experian has integrated its other products with the Data Quality Platform and is also working to extend it.

The Data Quality Platform is a Java product that runs on Windows (client and server), Linux (Red Hat and SUSE), Solaris, AIX and HP-UX; other Java-compliant platforms can be supported on request. It uses JDBC for database connectivity and also supports flat files and Microsoft Excel. However, it does not support non-relational databases, including NoSQL sources.

The product is underpinned by a proprietary correlation database, which stores data by unique value (each value is stored just once) rather than by table or column. As a result it uses less disk space than traditional databases, and the same design enables unique functionality and improves performance. As an indication of the latter, the Data Quality Platform can present as many as two billion records on-screen with full browsing and filtering. A further advantage of having its own database is that there is no need to embed a third-party database engine, so there are no bugs, or compatibility, administration or performance issues, arising from one.

As far as functionality is concerned, the product can distinguish all types and subtypes of data, and one particularly interesting feature is the ability to assign monetary weightings during ongoing monitoring. This is useful for justifying and prioritising remediation.
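The correlation database is proprietary and its internals are not described here, but the general idea of value-based storage can be sketched. The Python example below is a hypothetical illustration, not Experian's implementation: the `ValueStore` class, its methods and the sample rows are invented for this sketch. It keeps each distinct value once and records every occurrence as a reference back to it. A repeated value such as "UK" therefore costs one stored entry however many records or columns contain it, and a lookup by value goes straight to the matching records.

```python
from collections import defaultdict


class ValueStore:
    """Hypothetical toy value-based store (not Experian's design).

    Each distinct value is stored once; every cell holding that value is
    recorded as a (record_id, column) reference to the single stored copy.
    Values must be hashable.
    """

    def __init__(self):
        self.values = []               # distinct values, each stored once
        self.value_ids = {}            # value -> index into self.values
        self.refs = defaultdict(set)   # value index -> {(record_id, column)}

    def _intern(self, value):
        # Reuse the existing entry if this value has been seen before,
        # regardless of which table or column it came from.
        if value not in self.value_ids:
            self.value_ids[value] = len(self.values)
            self.values.append(value)
        return self.value_ids[value]

    def add_record(self, record_id, record):
        # Store each cell as a reference to its (possibly shared) value.
        for column, value in record.items():
            vid = self._intern(value)
            self.refs[vid].add((record_id, column))

    def find(self, column, value):
        """Return the ids of records whose `column` holds `value`."""
        vid = self.value_ids.get(value)
        if vid is None:
            return set()
        return {rid for rid, col in self.refs[vid] if col == column}

    def occurrences(self, value):
        """Count every cell holding `value`, across all columns."""
        vid = self.value_ids.get(value)
        return 0 if vid is None else len(self.refs[vid])


# Illustrative sample data (invented for this sketch).
rows = [
    {"city": "London", "country": "UK"},
    {"city": "Leeds", "country": "UK"},
    {"city": "London", "country": "UK"},
]
store = ValueStore()
for i, row in enumerate(rows):
    store.add_record(i, row)

print(len(store.values))                # 3 distinct values for 6 cells
print(sorted(store.find("city", "London")))  # [0, 2]
print(store.occurrences("UK"))          # 3
```

Because the value, rather than the table or column, is the unit of storage, duplicate detection and cross-column matching fall out of the structure directly. A production engine would also need compression, persistence and concurrency control, none of which this toy attempts.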

Another major feature is support for prototyping both the business rules used in a data quality context and the transformation rules used in a data migration environment. For migration, the product can generate ETL specifications and can be used as a standalone migration solution. The big advantage of the Experian technology set here is that you do not need a separate ETL tool, with its associated staff, infrastructure and project timescales.

More generally, the Data Quality Platform supports full cleansing, enrichment and deduplication using reference data, patterns, synonyms, fuzzy matching and parsing, through more than 300 native functions plus any number of customer-specified functions. External applications can also call these functions via a REST API, allowing enterprise-wide re-use. The product is also very flexible with respect to both data and metadata, and supports customisations such as building a business glossary associated with data assets. It does not support external authentication mechanisms such as Active Directory or LDAP, relying on its own role-based security instead.

Experian Data Quality has a significant services business in both the UK and the USA; in the UK alone, for example, it generates around £3m in revenue. In addition to training, support and so forth, this division offers integration services for the company's products, data strategy services, and what might be called bureau services for off-site, one-off cleansing initiatives.
