Data Virtualisation

Analyst Coverage: Philip Howard

This page has been archived, please visit the Data Movement page for related content.

Data virtualisation makes all data, regardless of where it is located and regardless of what format it is in, look as if it is in one place and in a consistent format. Note that this is not necessarily ‘your’ data: it may also include data held by partners or data on web sites, and it may be on-premises or in the Cloud. Also bear in mind that when we say “regardless of format” we mean that literally, so we would include relational data, data in Hadoop, XML and JSON documents, flat file data, spreadsheet data and so on.

Given that all of this data looks as if it is in a consistent format, you can then easily merge it into applications or queries without physically moving it. This may be important where you do not own the data, where you cannot move it for security reasons, or simply where it would be too expensive to move it physically. Thus data virtualisation supports the concept of data federation (querying across multiple heterogeneous platforms) as well as mash-ups.
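As a rough illustration of federation, the following minimal Python sketch joins customer records held in a CSV flat file with order records held as JSON documents. Both sources, their field names and the function names are hypothetical, and the data is inlined as strings so that the example runs on its own; a real product would reach out to live databases, files or web services. The point is that the join happens at query time, in memory, rather than by first copying both sources into a central store.

```python
import csv
import io
import json

# Hypothetical source 1: customer records held as a CSV flat file.
CUSTOMERS_CSV = """customer_id,name,region
1,Acme Ltd,North
2,Globex plc,South
3,Initech,North
"""

# Hypothetical source 2: order records held as JSON documents (e.g. from a web service).
ORDERS_JSON = """[
  {"order_id": 100, "customer_id": 1, "amount": 250.0},
  {"order_id": 101, "customer_id": 3, "amount": 75.5},
  {"order_id": 102, "customer_id": 1, "amount": 40.0}
]"""


def read_customers():
    """Read customer rows from the CSV source on demand."""
    for row in csv.DictReader(io.StringIO(CUSTOMERS_CSV)):
        yield {"customer_id": int(row["customer_id"]), "name": row["name"], "region": row["region"]}


def read_orders():
    """Read order rows from the JSON source on demand."""
    for doc in json.loads(ORDERS_JSON):
        yield {"order_id": doc["order_id"], "customer_id": doc["customer_id"], "amount": doc["amount"]}


def federated_join():
    """Join orders to customers at query time; neither source is persisted centrally."""
    # Build a transient lookup from one source, then stream the other past it (a hash join).
    customers = {c["customer_id"]: c for c in read_customers()}
    for order in read_orders():
        customer = customers.get(order["customer_id"])
        if customer is not None:
            yield {"order_id": order["order_id"], "name": customer["name"], "amount": order["amount"]}


if __name__ == "__main__":
    for row in federated_join():
        print(row)
```

Building a lookup from one source and streaming the other past it is the simplest hash join; commercial engines typically choose among several join strategies depending on source sizes and capabilities.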

- Data virtualisation virtualises data – it makes all relevant data sources, including databases, web content and application environments, appear as if they were in one place so that you can access them as such.

- It abstracts data – that is to say, it presents the data to interrogating applications in a consistent fashion regardless of any native structure and syntax that may be in use in the underlying data sources.

- It federates data – it allows you to pull data together from diverse, heterogeneous sources (which may contain either operational or historical data or both) and present that in a holistic manner, while maintaining appropriate security measures.

- It presents data in a consistent format to the front-end application either through relational views (via SQL) or by means of web services.
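The abstraction and consistent-format points above can be sketched as a set of adapters, each mapping one source's native structure onto a common schema, behind a single logical view. This is a minimal Python sketch under stated assumptions: the `CrmAdapter`, `PartnerFeedAdapter` and `VirtualView` classes and the source field names are hypothetical, and a plain Python filter stands in for the SQL view or web service through which a real product would present the data.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, Iterator, List, Protocol


@dataclass(frozen=True)
class Customer:
    """The single, consistent shape that consuming applications see."""
    customer_id: int
    name: str
    country: str


class SourceAdapter(Protocol):
    """Hypothetical adapter interface: maps one source's native structure to Customer."""
    def rows(self) -> Iterator[Customer]: ...


class CrmAdapter:
    """Hypothetical CRM source with flat records and its own field names."""
    def __init__(self, fetch: Callable[[], Iterable[dict]]):
        self._fetch = fetch  # called on every query, so nothing is copied up front

    def rows(self) -> Iterator[Customer]:
        for r in self._fetch():
            yield Customer(int(r["CUST_NO"]), r["CUST_NAME"].strip(), r["CTRY"].upper())


class PartnerFeedAdapter:
    """Hypothetical partner feed that delivers nested documents."""
    def __init__(self, fetch: Callable[[], Iterable[dict]]):
        self._fetch = fetch

    def rows(self) -> Iterator[Customer]:
        for d in self._fetch():
            profile = d["profile"]
            yield Customer(d["id"], profile["displayName"], profile["address"]["countryCode"].upper())


class VirtualView:
    """One logical 'table' over several sources; rows are read from them at query time."""
    def __init__(self, *sources: SourceAdapter):
        self._sources = sources

    def select(self, where: Callable[[Customer], bool] = lambda c: True) -> List[Customer]:
        return [c for source in self._sources for c in source.rows() if where(c)]


if __name__ == "__main__":
    view = VirtualView(
        CrmAdapter(lambda: [{"CUST_NO": "7", "CUST_NAME": " Acme Ltd ", "CTRY": "gb"}]),
        PartnerFeedAdapter(lambda: [{"id": 42, "profile": {"displayName": "Globex",
                                                            "address": {"countryCode": "de"}}}]),
    )
    print(view.select(lambda c: c.country == "GB"))
```

The consuming application only ever sees `Customer` rows; adding a new source means writing one more adapter, not changing the application.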

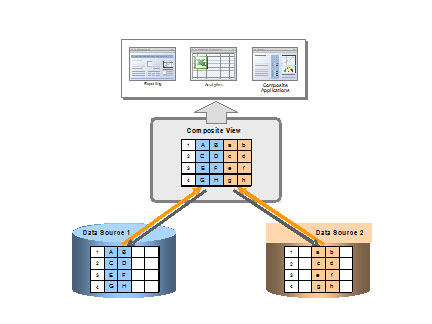

Data Virtualisation, Figure 1

Figure 1 illustrates the approach being used, though note that the sources do not have to be relational and the term “composite” is used generically rather than to refer to the vendor that used to have that name.

Most companies have silos of information, and it is often the case that you would like to join this piece of information in this silo with that piece of information in that silo. You could consolidate the environments, but that would be prohibitively costly; you could hard-code integration between the relevant data elements, but that would be inflexible and you would have to repeat the work whenever the issue arose. There are other possibilities, but none of them is as flexible or cost-effective as data virtualisation. Thus it is CIOs and CFOs who should care about data virtualisation, because it will enable them to meet business user requests more easily.

Because it is relatively quick to deploy, data virtualisation is also useful for prototyping purposes: to show users what the potential application will look like prior to actually building it, thus avoiding specification mismatch issues. This means that developers should care about it. It is often the case when prototyping in this way that the prototype ends up going into production, even if this was not the original intention (there is nothing wrong with this, but making sure that the prototype is being used within the scope originally envisaged is a potential governance issue).

Data virtualisation is increasingly recognised as a key part of the data warehousing landscape as well as for other uses. In particular, it will be a major element within the move towards logical data warehousing, though it is not the only technology that will be required for that purpose. In addition, as database vendors move further towards supporting multiple storage engines, database optimisers will increasingly take on some of the characteristics of data virtualisation.

More generally, the trends are towards better performance and increased integration. For performance, the major focus is on caching and push-down optimisation. As far as integration is concerned, vendors are now pushing into the big data space, with support for platforms such as Hadoop and, in some cases, for SPARQL (the query language for RDF stores and graph databases).

IBM, which started this market with what was then called DB2 DataJoiner, continues to lag behind the capabilities of other vendors in the market (largely due to lack of investment while the product was in the WebSphere portfolio), and the company has recently entered into a partnership with Denodo.

Note that business intelligence vendors such as SAP Business Objects have data federation capabilities built into their query environments, but they are not data virtualisation vendors per se. Most recently, Cisco has acquired Composite Software, leaving Denodo as the market-leading pure play vendor in this space. Red Hat, after an absence of some years, is becoming active again with what used to be MetaMatrix and is now JBoss Data Virtualization, while Attunity has moved in the opposite direction and is no longer marketing its federation product.

Solutions

These organisations are also known to offer solutions:

- Capsenta

- Cirro

- Cisco Systems

- Denodo

- IBM

- Informatica

- Oracle

- Queplix

- SAS