Options for analytic databases and data warehouses (2022)

2022 Bloor, All Rights Reserved.

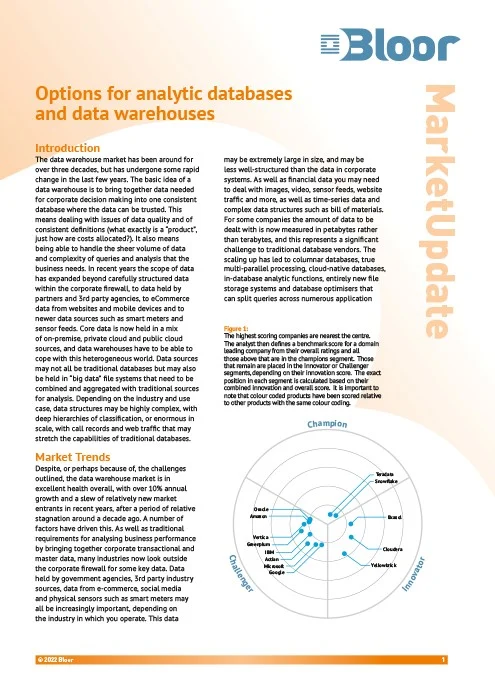

Classification

The data warehouse market has been around for over three decades but has changed rapidly in the last few years. The basic idea of a data warehouse is to bring together the data needed for corporate decision-making into one consistent database where the data can be trusted. This means dealing with issues of data quality and of consistent definitions (what exactly is a “product”? just how are costs allocated?). It also means being able to handle the sheer volume of data and the complexity of queries and analysis that the business needs.

In recent years the scope of data has expanded beyond carefully structured data within the corporate firewall to include data held by partners and third-party agencies, eCommerce data from websites and mobile devices, and newer data sources such as smart meters and sensor feeds. Core data is now held in a mix of on-premises, private cloud and public cloud sources, and data warehouses have to be able to cope with this heterogeneous world. Not all data sources are traditional databases: some data is held in “big data” file systems and needs to be combined and aggregated with traditional sources for analysis.

Depending on the industry and use case, data structures may be highly complex, with deep hierarchies of classification, or enormous in scale, with volumes of call records and web traffic that may stretch the capabilities of traditional databases.
