Data Catalogues

Analyst Coverage: Philip Howard

This page has been archived and merged, please visit the Data Discovery and Catalogues page for new content.

Put simply, a Data Catalogue is a repository of information about a company’s data assets: what data is held, what format it is in, within which (business) domains that data is relevant, and where it is stored (in which databases and/or files). Much of this information is collected automatically. The information within a Data Catalogue may be classified further by geography, time, access control (who can see the data) and so on. Data Catalogues are indexed and searchable, and support self-service and collaboration.
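To make the definition concrete, the sketch below models a catalogue entry as a small record holding the items listed above (format, location, business domains, a geography classification and the roles allowed to see the asset), with a search that respects access control. The class names, fields and sample assets are hypothetical and purely illustrative; commercial catalogues use far richer metadata models.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Set


@dataclass
class CatalogueEntry:
    """Metadata about one data asset (hypothetical, illustrative schema)."""
    name: str
    data_format: str                 # e.g. "parquet", "relational table"
    location: str                    # which database and/or file holds it
    domains: Set[str] = field(default_factory=set)        # business domains
    geography: Optional[str] = None  # one example of a further classification
    allowed_roles: Set[str] = field(default_factory=set)  # access control


class DataCatalogue:
    """An in-memory catalogue that can be searched, subject to access control."""

    def __init__(self) -> None:
        self._entries: List[CatalogueEntry] = []

    def register(self, entry: CatalogueEntry) -> None:
        self._entries.append(entry)

    def search(self, term: str, role: str) -> List[str]:
        """Return the names of assets matching `term` that `role` is allowed to see."""
        term = term.lower()
        hits = []
        for e in self._entries:
            if role not in e.allowed_roles:
                continue  # hide assets this user may not see
            searchable = " ".join([e.name, e.data_format, e.location, *sorted(e.domains)]).lower()
            if term in searchable:
                hits.append(e.name)
        return hits


catalogue = DataCatalogue()
catalogue.register(CatalogueEntry("sales_2023", "parquet", "lake/sales/2023/",
                                  {"finance", "sales"}, "UK", {"analyst"}))
catalogue.register(CatalogueEntry("staff_salaries", "relational table", "hr_db.salaries",
                                  {"hr"}, None, {"hr_admin"}))
print(catalogue.search("finance", role="analyst"))   # ['sales_2023']
print(catalogue.search("salaries", role="analyst"))  # [] (not visible to analysts)
```

The same search run with the `hr_admin` role would return `staff_salaries`, which is the point of holding access control alongside the other metadata.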

Why is it important (hot)?

Designed originally to work in conjunction with data lakes, today’s data catalogues can span multiple data sources (relational, NoSQL and others), and they help to eliminate silos of data. Firstly, therefore, users potentially have access to all the information across the organisation and are not limited by the location of any data they are interested in. Secondly, catalogues enable self-service and, thereby, productivity. They allow business users – with appropriate permissions – to search for the information they are focused on without recourse to IT. Thirdly, they help users to find information much more quickly, rather than wasting inordinate amounts of time searching for data. Finally, given the deluge of data by which many companies are being overwhelmed, catalogues help to make sense of it all by providing order and structure, so that users can see which data is relevant and which is not.

Figure 1 – Amount of time spent by different user groups on different data activities.

How do they work?

Data Catalogues can be created in a similar manner to the way Google provides a “catalogue” of web documents: by using spiders (crawlers) or other technologies to create a fully searchable experience. Business-specific terminology can be derived from business glossaries.
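A minimal sketch of the idea, assuming a crawler has already harvested each asset’s name and column names: the harvested text is split into words and placed in an inverted index (the same basic structure web search engines use), and a business glossary expands a business term such as “customer” into the technical abbreviations actually found in the data. The asset names, columns and glossary entry are made up for illustration.

```python
import re
from collections import defaultdict
from typing import Dict, Iterable, List, Set


def tokenize(text: str) -> List[str]:
    """Split names such as 'crm.clients' or 'cust_id' into lowercase words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def build_index(assets: Dict[str, Iterable[str]]) -> Dict[str, Set[str]]:
    """Build an inverted index: word -> names of the assets whose name or columns contain it."""
    index: Dict[str, Set[str]] = defaultdict(set)
    for asset, columns in assets.items():
        for text in [asset, *columns]:
            for word in tokenize(text):
                index[word].add(asset)
    return dict(index)


def search(index: Dict[str, Set[str]], glossary: Dict[str, List[str]], query: str) -> List[str]:
    """Expand business terms via the glossary, then look every word up in the index."""
    words = set(tokenize(query))
    for word in list(words):
        words.update(glossary.get(word, []))
    hits: Set[str] = set()
    for word in words:
        hits |= index.get(word, set())
    return sorted(hits)


# What a crawler might have harvested: asset name -> column names (made-up data).
harvested = {
    "crm.clients": ["client_id", "client_name"],
    "billing.invoices": ["cust_id", "amount"],
    "hr.staff": ["staff_id", "salary"],
}
# Hypothetical glossary entry mapping a business term to technical abbreviations.
glossary = {"customer": ["cust", "client"]}

index = build_index(harvested)
print(search(index, glossary, "customer"))  # ['billing.invoices', 'crm.clients']
```

Without the glossary, a search for “customer” would find nothing here, because no asset uses that word; this is why business terminology matters to the search experience.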

While Data Cataloguing tools can discover, for example, the geographical details pertaining to a data asset, they cannot determine the relevance of that information. For that, a user will need to define the level at which geography is important: by town, state, country or region, for example. So, some manual intervention will be required. This may also be true where what can be discovered about an asset is not clear-cut. In-built machine learning will be useful for classification purposes, so that the automated classification of data improves over time, reducing the need for manual input.
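The interplay between manual intervention and learning can be sketched as follows. This is a deliberately simple vote-counting stand-in, not the machine learning any particular product uses: it remembers which labels users assigned to column names and only suggests a label once enough evidence has accumulated, otherwise returning `None` to signal that a human must decide. The class name, the `min_votes` threshold and the `geography:country` label format are hypothetical.

```python
from collections import Counter, defaultdict
from typing import Dict, Optional


class FeedbackClassifier:
    """Suggest a label for a column based on labels users assigned by hand earlier.

    A deliberately simple stand-in for "in-built machine learning": it counts,
    for every word in a column name, which labels users chose, and then votes.
    """

    def __init__(self, min_votes: int = 2) -> None:
        self.min_votes = min_votes  # evidence required before suggesting anything
        self.counts: Dict[str, Counter] = defaultdict(Counter)

    def record_manual_label(self, column: str, label: str) -> None:
        for word in column.lower().split("_"):
            self.counts[word][label] += 1

    def suggest(self, column: str) -> Optional[str]:
        votes: Counter = Counter()
        for word in column.lower().split("_"):
            votes.update(self.counts.get(word, Counter()))
        if not votes:
            return None  # nothing learned yet: manual intervention needed
        label, n = votes.most_common(1)[0]
        return label if n >= self.min_votes else None


clf = FeedbackClassifier()
print(clf.suggest("ship_country"))  # None: a user must classify it
# The user decides geography matters at country level and labels two columns.
clf.record_manual_label("billing_country", "geography:country")
clf.record_manual_label("country_code", "geography:country")
print(clf.suggest("ship_country"))  # geography:country
```

Note that the user-chosen level (country rather than town or region) is carried in the label itself, so the tool learns the relevance decision as well as the classification.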

To improve the quality of the catalogue, “crowd sourcing” allows users to tag, comment upon or otherwise annotate data in the catalogue. Some products also let users give a data asset a star rating reflecting its usefulness. The software monitors who accesses which data and uses it in which reports, models or processes. If a user starts to search the catalogue for a particular term, the software will suggest related data that other users have also looked at in conjunction with that term. Catalogues can also be useful in identifying redundant, out-of-date and trivial (ROTten) data that should be removed from the database.
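The suggestion mechanism described above can be sketched, under simplifying assumptions, as a counter of which assets users opened after searching for a term, with star ratings used to break ties. Real products weigh many more signals; the class, method names and sample assets here are hypothetical.

```python
from collections import Counter, defaultdict
from statistics import mean
from typing import Dict, List


class UsageTracker:
    """Record which assets users open after searching a term, plus star ratings."""

    def __init__(self) -> None:
        self.views: Dict[str, Counter] = defaultdict(Counter)  # term -> asset -> view count
        self.ratings: Dict[str, List[int]] = defaultdict(list)  # asset -> stars given

    def record_view(self, term: str, asset: str) -> None:
        self.views[term.lower()][asset] += 1

    def rate(self, asset: str, stars: int) -> None:
        if not 1 <= stars <= 5:
            raise ValueError("stars must be between 1 and 5")
        self.ratings[asset].append(stars)

    def average_rating(self, asset: str) -> float:
        stars = self.ratings.get(asset)
        return float(mean(stars)) if stars else 0.0

    def suggest(self, term: str, k: int = 3) -> List[str]:
        """Assets other users viewed for this term: most viewed first, then best rated."""
        counts = self.views.get(term.lower(), Counter())
        ranked = sorted(counts, key=lambda a: (-counts[a], -self.average_rating(a), a))
        return ranked[:k]


tracker = UsageTracker()
for asset in ["crm.clients", "crm.clients", "billing.invoices", "web.sessions"]:
    tracker.record_view("churn", asset)
tracker.rate("web.sessions", 5)
tracker.rate("billing.invoices", 2)
print(tracker.suggest("churn"))  # ['crm.clients', 'web.sessions', 'billing.invoices']
```

The same view counts could also feed ROT detection: assets that nobody has opened for a long time are candidates for review, although deciding whether they are truly redundant still needs human judgement.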

In little more than two years since the current crop of data catalogues was first introduced, these products have already been adopted by many leading companies, including GE, Pfizer, LinkedIn, Tesco and eBay, to name just a few. As the quotes in this Hot Report illustrate, the use of data catalogues can substantially reduce the time it takes to find and analyse data: from months to days. According to a recent Gartner Research Circle survey on data and analytics trends, “data risk and information governance” and “deriving value from data” – both of which data catalogues help to address – were the second (34%) and third (33%) most common challenges faced by organisations in their data and analytics programmes.

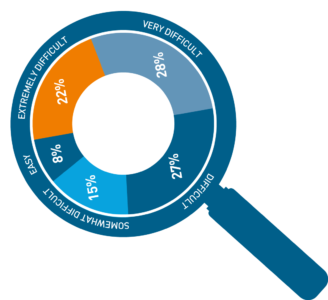

Figure 2 – A survey by Dresner Advisory Services found that 50% of respondents felt it was “very difficult” or “extremely difficult” to find relevant data and only 8% found it “easy”.

Quotes

“A core-piece in our strategy is our integrated self-service data analytics platform. The data catalogue is part of that platform and already helps more than 600 users in the group to discover data easily and to share knowledge with each other. We expect time to insight to go down significantly.” Munich Re

“The cost and complexity of managing our data was spiralling out of control.” Data preparation supported by the data catalogue “has cut costs by 40% and accelerated IT delivery.” TD Bank

“8 months ago, it took our analysts 3 months to deliver data-driven business insights. Today, our team (using a data catalogue together with data preparation) gets answers in less than 2 days.” Astellas Pharma

A key issue is whether you see a data catalogue as a single capability that spans your enterprise and its resources, or whether you see it as an enabling technology that is embedded within other tools and products. Theoretically, the former would be a best practice approach, with all relevant tools interfacing to a single catalogue. This might be provided by one of the pure-play vendors or perhaps as part of a data governance solution where the catalogue integrates with, and leverages, the business glossary.

In reality, such a centralised solution is unlikely to be achievable, and we expect to see organisations with multiple catalogues, not least because most enterprises have multiple business intelligence products in use, and virtually all business intelligence vendors (as well as suppliers of data preparation products) are embedding cataloguing capability.

Regardless of the number of data catalogues in use, you will want your catalogue(s) to integrate with both your business glossary and with any metadata management capabilities you have in place. Clearly, this will be more complex if you have multiple catalogues. A possible approach in this case would be to link catalogues by means of federated queries.
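A federated query can be sketched as sending the same search to every catalogue in parallel and merging the answers, so that an asset known to several catalogues appears once with all of its sources listed. The sketch below assumes, hypothetically, that each catalogue can be wrapped as a function returning `(asset name, location)` pairs and that the location identifies an asset across catalogues; in practice each product exposes its own API, and matching the same asset across catalogues is considerably harder.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List, Tuple

# Each catalogue is modelled as a function returning (asset name, location) pairs.
SearchFn = Callable[[str], List[Tuple[str, str]]]


def federated_search(catalogues: Dict[str, SearchFn], term: str) -> List[dict]:
    """Send `term` to every catalogue in parallel and merge the answers.

    An asset reported by several catalogues (same location) becomes one result
    that lists every catalogue that knows about it.
    """
    merged: Dict[str, dict] = {}
    with ThreadPoolExecutor(max_workers=max(1, len(catalogues))) as pool:
        futures = {name: pool.submit(fn, term) for name, fn in catalogues.items()}
        for name, future in futures.items():
            for asset, location in future.result():
                entry = merged.setdefault(
                    location, {"asset": asset, "location": location, "sources": []})
                entry["sources"].append(name)
    return sorted(merged.values(), key=lambda e: e["location"])


def bi_catalogue(term: str) -> List[Tuple[str, str]]:
    # Hypothetical catalogue embedded in a BI tool.
    return [("Sales", "warehouse.sales")] if "sales" in term else []


def governance_catalogue(term: str) -> List[Tuple[str, str]]:
    # Hypothetical catalogue that is part of a data governance suite.
    if "sales" not in term:
        return []
    return [("sales_fact", "warehouse.sales"), ("sales_targets", "files/targets.xlsx")]


for result in federated_search({"bi": bi_catalogue, "governance": governance_catalogue}, "sales"):
    print(result)
# {'asset': 'sales_targets', 'location': 'files/targets.xlsx', 'sources': ['governance']}
# {'asset': 'Sales', 'location': 'warehouse.sales', 'sources': ['bi', 'governance']}
```

As written, a failure in any one catalogue propagates to the caller; a production approach would want timeouts and partial results, so that one unavailable catalogue does not block the whole search.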

What is the bottom line?

If you care about analytics – and who doesn’t – then a data catalogue is a fundamental requirement for creating a data-driven business and supporting self-service.