IRI Data Quality and Improvement

Published:

Content Copyright © 2022 Bloor. All Rights Reserved.

Also posted on: Bloor blogs

It always bears repeating that being able to serve up high quality data is really important. This is partly because the consequences of poor data quality can be severe – misleading analytics, stymied processes, greater storage costs (due to duplicated data), and so on – but also because historically many organisations have not treated data quality as the priority it should be.

Since it is traditionally a negative sell (on the grounds that bad quality leads to bad outcomes, which is entirely correct but not terribly effective as a sales tactic) this is unsurprising, but still disappointing. In turn this means that data quality issues tend to be addressed reactively, which is to say, when they start causing problems that are so significant they can’t be ignored.

Negative sells are generally unappealing…until it’s too late

Of course, by this point data quality is normally so poor that the data that exists needs to be improved substantially just to get to a reasonable standard. Then that standard needs to be maintained to avoid history repeating itself. There are, fundamentally, two ways to do this.

First, and obviously, you can periodically check the quality of your data and remediate it when that quality dips. Secondly, you can improve your business processes in order to produce higher-quality data as the default. The latter could be through the explicit incorporation of data quality checking and remediation, or it could be a matter of building processes that are more robust and less error-prone. Most effective solutions will incorporate both of these techniques.

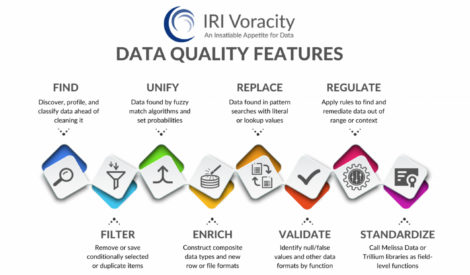

IRI provides both data quality and data improvement through its data management platform. The relevant capabilities available in IRI Voracity are shown in the image below, and are generally what you would expect from a competent data quality solution, incorporating discovery, remediation, deduplication and whatnot.

Data quality features in IRI Voracity

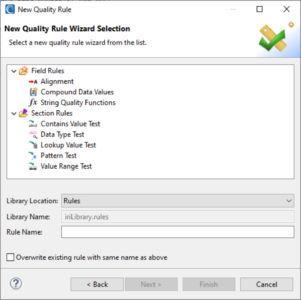

Notably, IRI Workbench – an Eclipse-based IDE and the platform’s GUI – supports a selection of data quality rules, including cleansing, enrichment and validation, to be used principally as part of IRI CoSort data transformations but which may also be used inside Voracity ETL, reporting and data wrangling jobs. This means you can use Voracity to build data quality into your business processes (your workflows, say) as described above.

Creating a new data quality rule in IRI Voracity

There are rules provided for all sorts of use cases, including both section- and field-level data quality, with the former providing tests that look for values, data types, patterns and so on within atomic data sources and targets (e.g., files and tables) and the latter allowing you to amend field contents when those tests fail.

IRI Workbench also offers a wizard for consolidating duplicate, or near-duplicate, data in databases and files. Various methods for identifying duplicates are provided, including both exact and fuzzy matching methods. Elsewhere in the platform, phonetic matching methods are also offered, meaning that you could figure out – for example – that a phone operator heard and recorded “John” when the customer said their name was “Joan”, then amend accordingly.

Data consolidation wizard in IRI Workbench

It’s also important to realise that CoSort data validation jobs (and Voracity jobs by extension) can also run off of template structures that define specific data types and formats, including custom patterns that can be used for reformatting and standardisation.

For our purposes this has two major advantages: it means that any new data will rigidly conform to its type’s template, and it makes it very easy for the platform to recognise when a piece of data has broken with its corresponding template and needs to be remediated. Format templates can be constructed in IRI Workbench, or scripted directly if you so choose. Moreover, templates are centralised and promote re-use.

Finally, we should also point out that IRI does not stand alone when it comes to data quality: in fact, it can invoke standardisation libraries supported by various third-party solutions – including Melissa Data and Trillium – at the field level within CoSort data transformation and reporting (and Voracity ETL and masking) jobs.

All in all, there are several ways to validate, enrich, and homogenise data in the Voracity ecosystem, either on an ad hoc or systemic basis, in order to sanitise data targets, improve the accuracy of analytics, and so on.